My history with builds

Over the years I, like many, have been exposed to various ways to build and deploy .NET code. In 2001 I was building locally without versioning and using FTP to push website changes. Libraries we depended on were committed to source control. By 2007 I was using MSBuild to create ClickOnce WPF deployments. This time we had a build server, CruiseControl.NET, to version the builds and run the compilation. This was a load of work. Whenever someone had to change the build or deploy process, they had to brush up on their MSBuild/ClickOnce skills by grabbing one of the heavily thumbed books around the office.

Roll on 2011 and TeamCity was the build server du jour. By now I had seen various versioning, dependency management and build strategies. Out of the box, MSBuild didn’t allow you to specify the version of the build artefact from the command line. Scripts or custom MSBuild ‘Tasks’ were required. Libs were still committed to source control.

NuGet came out, and we finally had a dependency management platform for the .NET ecosystem. I personally found PowerShell to be the most effective build tool at this point in time. While I find PowerShell’s syntax cumbersome and unintuitive, it does have access to the full power of the .NET Framework while still being a scripting language. This put it leagues ahead of custom MSBuild Tasks and CMD/batch scripts. Psake came along to give PowerShell builds a nice build-script flavour.

So, up until recently, Psake/PowerShell was my uncomfortable default tooling for build scripts. With Roslyn finally maturing, we could now have C# as a scripting language. Well, to be fair, LINQPad (with its command-line tool LPRun.exe) already gave us this. Then along came Cake as a build tool. C# build scripts! Finally!

But what about the dotnet CLI and the most recent MSBuild? Hasn’t the landscape changed again, with the csproj project format giving way to xproj and project.json, and then coming back as a new csproj format?

Well, currently in my office we have four styles of build tooling:

- Compiled .NET executables

- PowerShell scripts

- Cake scripts

- Other odds and sods.

We also have on-premises TeamCity, cloud-based TeamCity, and the new favourite, AppVeyor (no more snowflake build agents!). That is a lot of combinations of tooling. Diversity is good, but occasionally taking stock and consolidating can be good too.

What I want out of a build script

To figure out what I want out of a build tool, I recently took stock of the current options. My focus is just on building .NET libraries, not on deploying websites, Docker containers, applications, etc. My ideal script would be as simple as four steps:

- Set the version for the assembly and package

- Compile the code in Release configuration (optimisations on), with XML docs and symbols

- Test the compiled code

- Package the artefacts with the specified version.

I want to use scripts. I don’t want compiled build tools. I have been there before and it added unnecessary friction. A change to the build script meant building the tooling (and testing, versioning and deploying it) before I could build the project I was actually working on. This lengthens my feedback loop and increases my frustration 😉

The next thing I want is to reduce the number of binary files checked into my source control repository. I know some people check in NuGet.exe or their test runner. No thanks, not for me. I would expect most dev machines and build servers to have NuGet on their path. If they don’t have it, or have a different version, then we can just download it to a local (git-ignored) folder and use it from there. I find this the least hostile thing to do. Ideally, test runners are package dependencies too, so they are simply available after a restore/build. Subsequent restores shouldn’t even hit the network, as the package should hopefully be cached on disk.
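The fallback described above (use NuGet from the path if it is there, otherwise download it once into a git-ignored folder) fits in a few lines. This is a minimal Python sketch, not part of my actual build: the `resolve_nuget` helper and the `tools` folder name are hypothetical, and the URL is NuGet’s published download location for the latest nuget.exe.

```python
import shutil
import urllib.request
from pathlib import Path

# NuGet's published download location for the latest nuget.exe.
NUGET_URL = "https://dist.nuget.org/win-x86-commandline/latest/nuget.exe"


def resolve_nuget(tools_dir: Path = Path("tools")) -> Path:
    """Return a usable nuget executable (hypothetical helper).

    Prefers whatever `nuget` is on the PATH; otherwise falls back to
    tools_dir/nuget.exe, downloading it only if it is not already there.
    tools_dir is assumed to be listed in .gitignore.
    """
    on_path = shutil.which("nuget")
    if on_path is not None:
        return Path(on_path)

    local = tools_dir / "nuget.exe"
    if not local.exists():
        tools_dir.mkdir(parents=True, exist_ok=True)
        # Network access happens only on the first run; later runs reuse the file.
        urllib.request.urlretrieve(NUGET_URL, str(local))
    return local
```

Checking that the NuGet on the path is the version you want is left out here; it would be one more condition before returning `on_path`.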

Lastly, I want to be able to multi-target my packages. They should be consumable by older .NET 4.5.2 applications, ideally by UWP/UWA apps, and by newer .NET 4.7 and .NET Core applications.

Psake

In early 2016, I had an open source project that I wanted a build script for. Tooling seemed to change every 10 weeks at the time due to the churn around .NET Core. So I thought to myself, why don’t I just cheat and copy what the guns do? At the time, Newtonsoft’s Json.NET was the most widely downloaded and supported NuGet package available, and its build script was open source. Copy+Paste, here I come.

The script was fully featured and well written, but far from simple. I took it, picked it to pieces, and made it work for me. It worked really well in the end. We used those bones for other projects at work, and other devs, including the grads, were able to maintain and update it. However, it was still a lot of code for the four steps I want. Using Psake did give it a modular feel, with tasks broken down into small methods. But… PowerShell. Like nails down a blackboard.

Here is an example of the style I had adopted: https://github.com/HdrHistogram/HdrHistogram.NET/blob/6d112203aa62a81f8434b599df46998e24ce26f5/build/build.ps1

It worked, it hit all my requirements (no libraries or tools in source control, scripted, capacity to multi-target). However, at over 300 lines long it was not ideal.

Cake

Cake is becoming popular in the office. I personally like the idea of a C# scripted build. However, I have held off picking this one up.

It looks good. Really good. It has built-in support for the standard test runners, code quality addins, hooks for TFS, Vagrant, Yeoman and loads of other things I don’t need.

However, my first concern: to kick it off, you run a PowerShell script. I was evaluating Cake so that I didn’t have to use PowerShell. The irony. Next, it seems to be a more fully featured tool than just a build script; it appears to do deployments too. So maybe for an application build tool this could be great. My last concern was that it is actually pretty verbose.

```csharp
Task("A")
    .Does(() =>
{
});

Task("B")
    .IsDependentOn("A")
    .Does(() =>
{
});

RunTarget("B");
```

The example above actually does nothing. It serves to illustrate how the dependency chain works, but it literally does nothing, and takes 10 lines of code to do it. Hmm.
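To make the dependency chain concrete: `RunTarget("B")` runs A first, because B declares `IsDependentOn("A")`. The toy Python model below mimics that behaviour with a depth-first walk that runs each dependency once, before anything that depends on it. It is a hypothetical illustration of the idea, not how Cake is implemented; `TaskGraph`, `task` and `run_target` are made-up names.

```python
from typing import Callable, Dict, List, Optional, Set


class TaskGraph:
    """Toy model of a Cake-style task graph (hypothetical, not Cake's code)."""

    def __init__(self) -> None:
        self.actions: Dict[str, Callable[[], None]] = {}
        self.deps: Dict[str, List[str]] = {}

    def task(self, name: str, action: Callable[[], None] = lambda: None,
             depends_on: Optional[List[str]] = None) -> None:
        # Roughly Task(name).IsDependentOn(...).Does(action) in Cake terms.
        self.actions[name] = action
        self.deps[name] = list(depends_on or [])

    def run_target(self, target: str) -> List[str]:
        """Run the target's dependencies depth-first, each once, then the target."""
        order: List[str] = []
        in_progress: Set[str] = set()

        def visit(name: str) -> None:
            if name in order:
                return  # already ran during this call
            if name in in_progress:
                raise ValueError(f"dependency cycle involving {name!r}")
            in_progress.add(name)
            for dep in self.deps[name]:
                visit(dep)
            in_progress.remove(name)
            self.actions[name]()
            order.append(name)

        visit(target)
        return order


graph = TaskGraph()
graph.task("A")
graph.task("B", depends_on=["A"])
print(graph.run_target("B"))  # ['A', 'B']
```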

I can see some real power in Cake; however, for building libraries it feels like a pretty big hammer.

dotnet cli

The dotnet command-line interface, and the whole .NET Core journey, has been unpleasant for me. In mid/late 2015 a colleague and I tried to port an open source library to .NET Core. By the time we had understood how it worked and got it working, everything had changed. Hey, it was beta; we hung our heads, but we knew it would probably end up this way. Roll forward some months: RC1 is released, but you still have to guess your way through the tooling. If you know the magic sauce it will work, but getting there is hard. RC2 comes out, the “DNX” tooling is dead, and there is a newer (better) command-line tool, “dotnet”. However, the tooling is still pretty raw. It is hard to know whether you should use project.json or xproj, and there isn’t much documentation around. RTM (1.0.0) quickly follows, but this is just the runtime. Microsoft are pretty vocal about the tooling being raw.

Five months later, project.json gets the bullet. Another work in progress in the bin. Swing and a miss.

Finally, Visual Studio 2017 is released (and patched, twice), so I take the plunge again. Three times bitten, twice shy, is that the saying? I have so many beta, RC and RTM tools, SDKs and IDEs that I don’t think my laptop would know what to do even if the install succeeded. To give this the best chance of success, I actually format my disk and reinstall Windows.

The reinstall goes surprisingly well.

Now that I have VS 2017 (15.2) with the .NET Core tooling installed, I can use the dotnet CLI with ease. I am aware that my flavour-of-the-day CI tool, AppVeyor, has this pre-installed too.

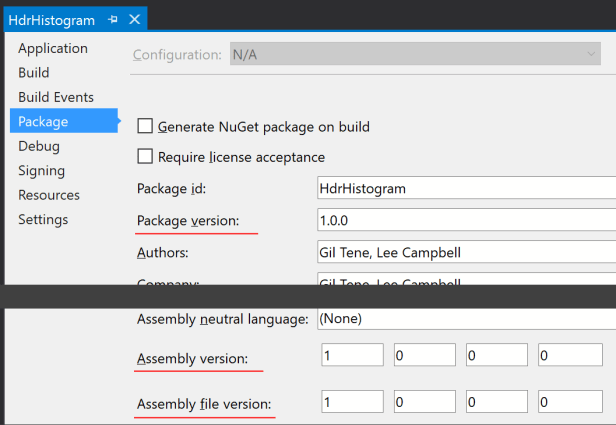

In theory, building with dotnet takes two steps: an explicit package restore, then the build. However, as per my requirements above, I also need to version the assembly. The AssemblyInfo.cs file seems to be gone these days, so I use VS to set the version from the project properties window. I see three places to do this.

Instead of updating AssemblyInfo.cs, this view now updates the csproj file, which uses the new format. That makes more sense. I like it.

I figure out the hard way that “Assembly version” and “Assembly file version” are overrides for “Package version”. Setting “Package version” sets these as you would expect. “Package version” accepts semantic versioning values, e.g. 1.2.3-beta, and the tooling seems smart enough to set the assembly versions to 1.2.3.0.
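The mapping I observed can be written down as a small function: drop any pre-release label or build metadata, then pad to four numeric parts. This Python sketch only restates that observed behaviour; it is not the SDK’s actual implementation, `assembly_version` is a hypothetical name, and it assumes a three- or four-part numeric version.

```python
import re

# MAJOR.MINOR.PATCH[.REVISION][-prerelease][+metadata]
_VERSION = re.compile(
    r"^(\d+)\.(\d+)\.(\d+)(?:\.(\d+))?"  # numeric parts
    r"(?:-[0-9A-Za-z.-]+)?"              # optional pre-release label, e.g. -beta
    r"(?:\+[0-9A-Za-z.-]+)?$"            # optional build metadata, e.g. +build.5
)


def assembly_version(package_version: str) -> str:
    """Derive a four-part assembly version from a package version (sketch)."""
    match = _VERSION.match(package_version)
    if match is None:
        raise ValueError(f"not a supported version string: {package_version!r}")
    major, minor, patch, revision = match.groups()
    return f"{int(major)}.{int(minor)}.{int(patch)}.{int(revision or 0)}"


print(assembly_version("1.2.3-beta"))  # 1.2.3.0
```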

Then I find out that you can set these values from MSBuild. As they are now defined in the csproj file, they are fair game. Finally.

Hold on, we are evaluating the dotnet CLI, not MSBuild. Well, as it turns out, the dotnet CLI actually sits on top of MSBuild. This means you can use the command-line arguments dotnet expects, and it will also pass MSBuild command-line arguments through, such as setting properties of a csproj file. Unlike Cake with PowerShell, I am not really exposed to MSBuild in the way I don’t like, just the way I do like 😀

Now, it is not all plain sailing. I have some hiccups trying to get NUnit to work, the Version thing threw me for a while, and I didn’t realise that you can build solutions, not just projects. Multi-targeting was an odd one too. First you need to know the magic strings for the target frameworks, and then you need to know to edit the `<TargetFramework>` element and add an “s” to pluralise it to `<TargetFrameworks>`. There is no tooling for that yet. Oren explains it in his multi-targeting post (listed in the links at the end). For my project I add

```xml
<TargetFrameworks>net45;netstandard1.3</TargetFrameworks>
```

and

```xml
<PropertyGroup Condition="'$(Configuration)|$(TargetFramework)|$(Platform)'=='Release|net45|AnyCPU'">
  <DocumentationFile>bin\Release\net45\HdrHistogram.xml</DocumentationFile>
</PropertyGroup>
<PropertyGroup Condition="'$(Configuration)|$(TargetFramework)|$(Platform)'=='Release|netstandard1.3|AnyCPU'">
  <DocumentationFile>bin\Release\netstandard1.3\HdrHistogram.xml</DocumentationFile>
  <DefineConstants>RELEASE;NETSTANDARD1_3</DefineConstants>
</PropertyGroup>
```
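The two PropertyGroups follow a pattern: the documentation file lives under `bin\<Configuration>\<TFM>\`, and the constant in the second group is the TFM upper-cased with dots replaced by underscores (netstandard1.3 becomes NETSTANDARD1_3). The hypothetical Python helper below just makes that pattern explicit for any `TargetFrameworks` value; it is an illustration, not something MSBuild runs, and `per_framework_settings` is a made-up name.

```python
from typing import Dict


def per_framework_settings(target_frameworks: str, assembly: str,
                           configuration: str = "Release") -> Dict[str, Dict[str, str]]:
    """Expand a TargetFrameworks value (e.g. "net45;netstandard1.3") into the
    per-framework DocumentationFile path and conventional symbol name."""
    settings: Dict[str, Dict[str, str]] = {}
    for tfm in (part.strip() for part in target_frameworks.split(";")):
        if not tfm:
            continue  # tolerate a trailing ';'
        settings[tfm] = {
            "DocumentationFile": f"bin\\{configuration}\\{tfm}\\{assembly}.xml",
            "Symbol": tfm.upper().replace(".", "_"),  # netstandard1.3 -> NETSTANDARD1_3
        }
    return settings


for tfm, values in per_framework_settings("net45;netstandard1.3", "HdrHistogram").items():
    print(tfm, values["DocumentationFile"], values["Symbol"])
```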

So finally I think I have something working.

First, restore my packages. To be honest, it would be good if I didn’t have to do this manually; I think building implies getting the dependencies.

```
dotnet restore
```

This assumes you have dotnet on your path and that you are in the directory containing the solution.
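Both assumptions can be checked before restoring, so a misconfigured machine fails with a clear message rather than a confusing restore error. A minimal Python sketch; `preflight` is a hypothetical helper, and looking for a `*.sln` file is my assumption about how to detect “the directory containing the solution”.

```python
import shutil
from pathlib import Path
from typing import List


def preflight(directory: Path) -> List[str]:
    """Return the problems that would stop `dotnet restore` working here."""
    problems: List[str] = []
    if shutil.which("dotnet") is None:
        problems.append("dotnet is not on the PATH")
    if not any(directory.glob("*.sln")):
        problems.append(f"no .sln file found in {directory}")
    return problems
```

A build script would call `preflight(Path.cwd())` first and stop if the list is not empty. `dotnet restore` also works from a folder holding a single project file; the sketch only checks for a solution to match the setup above.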

Next, compile in Release mode with the correct version, and output the XML docs.

```
dotnet build -c=Release /p:Version=1.2.3-beta
```

Here we are using the MSBuild “/p” switch to provide the version. “Package version” in the GUI ends up as the `<Version>` element in the csproj file.

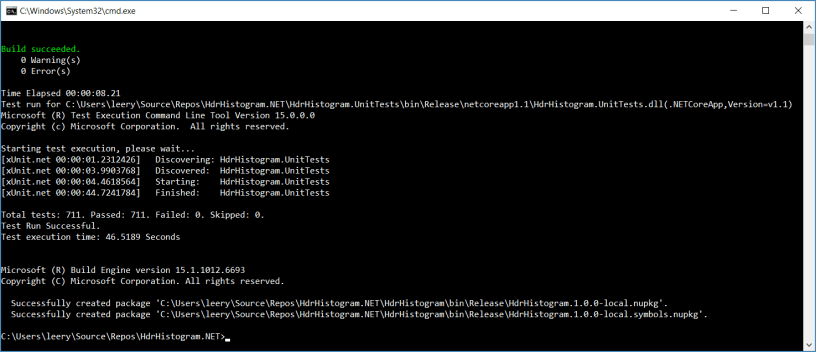

Next, I want to test the code I just compiled:

```
dotnet test .\HdrHistogram.UnitTests\HdrHistogram.UnitTests.csproj --no-build -c=Release
```

Note that here I use the built-in test feature of the dotnet CLI. My test project references the xUnit libraries and runner, so my script just has to express the intent to test. Also, as the code is already compiled, I pass the --no-build switch. I do still need to specify which configuration to test, as this is how it finds the DLLs to actually test. This is looking slick.

Finally, I need to package up the DLL, XML docs and symbols. This normally required a .nuspec file and NuGet available on the path. No more.

```
dotnet pack .\HdrHistogram\HdrHistogram.csproj -c=Release --include-symbols --no-build /p:Version=1.2.3-beta
```

Here I set the version and make sure it picks up the Release build and the symbols. Again, we don’t need to build, as that was just done.

Let’s put that all together in a script.

```
dotnet restore
dotnet build -c=Release /p:Version=1.2.3-beta
dotnet test .\HdrHistogram.UnitTests\HdrHistogram.UnitTests.csproj --no-build -c=Release
dotnet pack .\HdrHistogram\HdrHistogram.csproj -c=Release --no-build --include-symbols /p:Version=1.2.3-beta
```

👏

Well done Microsoft. That was a long wait, but it was worth it.

I don’t know if that even qualifies as a build script anymore. It seems too basic to be called a script.
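One small wrinkle remains: the version string appears twice. If that bothers you, a thin wrapper can take it once and stop at the first failing step. This is a hypothetical Python sketch, assuming Python 3 is available on the build agent; the project paths are the ones from the script above (forward slashes so it also runs off Windows), and `build_commands`/`run` are made-up names. A CI environment variable would do the same job.

```python
import subprocess
from typing import List

TEST_PROJECT = "HdrHistogram.UnitTests/HdrHistogram.UnitTests.csproj"
PACK_PROJECT = "HdrHistogram/HdrHistogram.csproj"


def build_commands(version: str) -> List[List[str]]:
    """The four dotnet steps, with the version supplied in one place."""
    return [
        ["dotnet", "restore"],
        ["dotnet", "build", "-c", "Release", f"/p:Version={version}"],
        ["dotnet", "test", TEST_PROJECT, "--no-build", "-c", "Release"],
        ["dotnet", "pack", PACK_PROJECT, "-c", "Release", "--no-build",
         "--include-symbols", f"/p:Version={version}"],
    ]


def run(version: str, dry_run: bool = False) -> None:
    for command in build_commands(version):
        print(" ".join(command))
        if not dry_run:
            # check=True raises CalledProcessError, so the first failing step stops the build.
            subprocess.run(command, check=True)
```

Calling `run("1.2.3-beta", dry_run=True)` prints the four commands without executing them, which is a cheap way to check the wiring.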

I think it is fair to say that I will be ditching PowerShell and Psake as my build tools for libraries. Cake, I think, I will be seeing again sometime in the future, especially for web/application deployments.

Links:

- https://docs.microsoft.com/en-us/nuget/schema/msbuild-targets

- https://oren.codes/2017/01/04/multi-targeting-the-world-a-single-project-to-rule-them-all/

- https://github.com/dotnet/docs/blob/master/docs/core/tools/index.md

- https://github.com/dotnet/templating/wiki/Available-templates-for-dotnet-new

- https://github.com/dotnet/docs/blob/master/docs/core/tutorials/libraries.md